Research

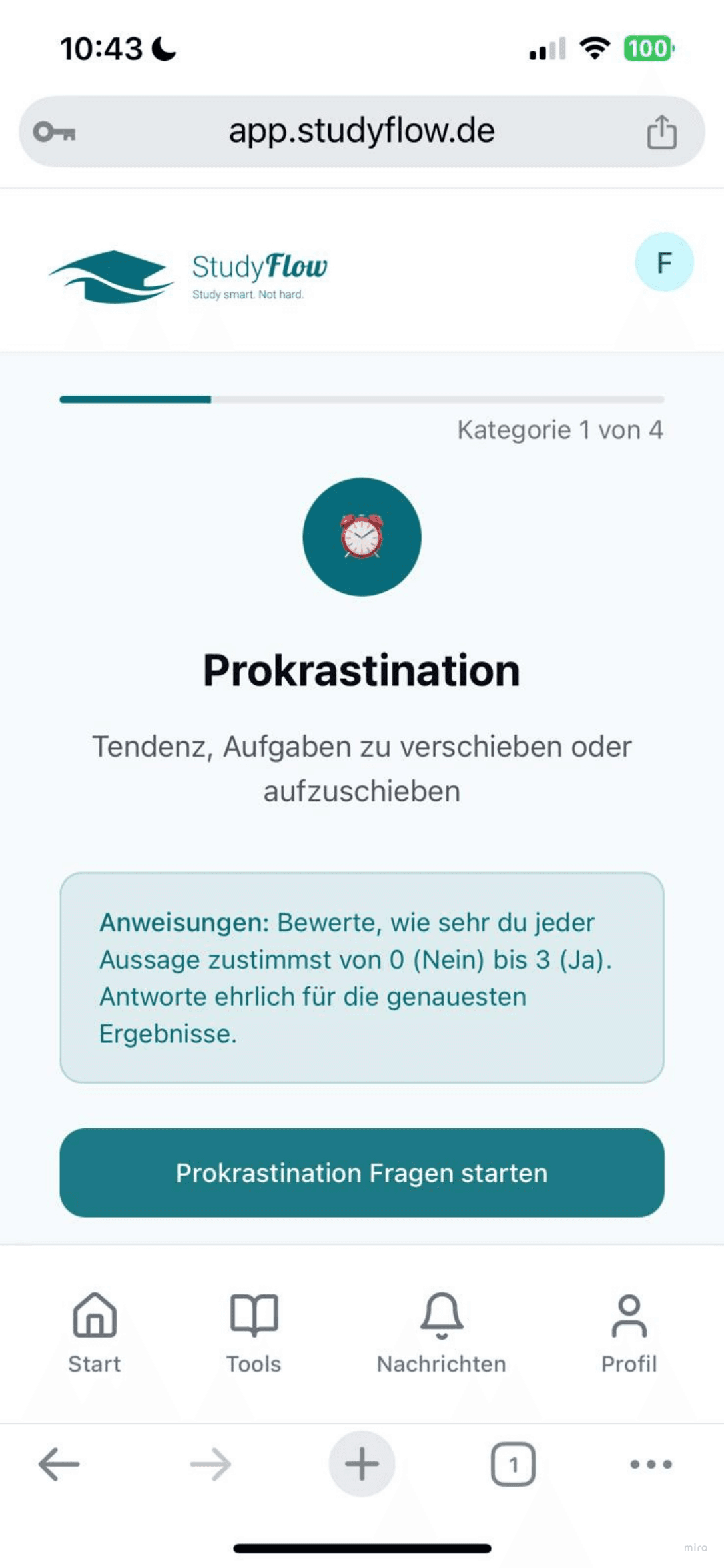

StudyFlow digitized a live coaching method developed by a psychology professor, transforming weekly group sessions into a self-guided app with diagnostic tools, learning methodologies, and a chatbot replacing the professor's guidance. When I joined, early signals suggested the product was on track.

When I joined StudyFlow, they already had an MVP and had conducted an alpha test.

Feedback

41 sign-ups and positive user feedback from the alpha test. On the surface, things looked promising.

My role

Plan and conduct a heuristic evaluation and usability testing to identify UX improvement opportunities.

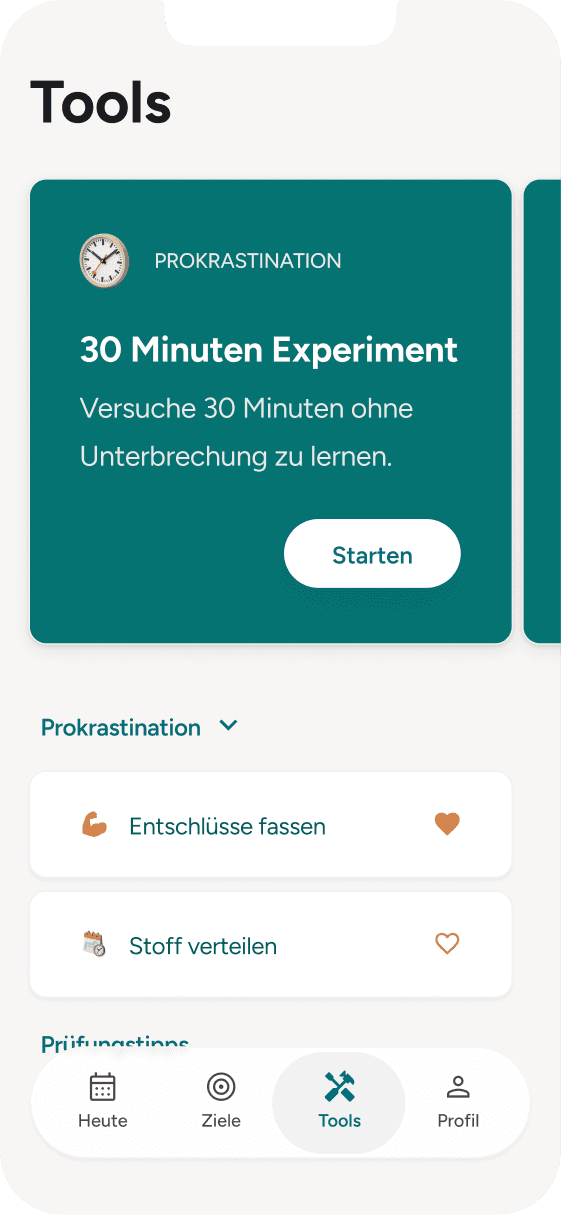

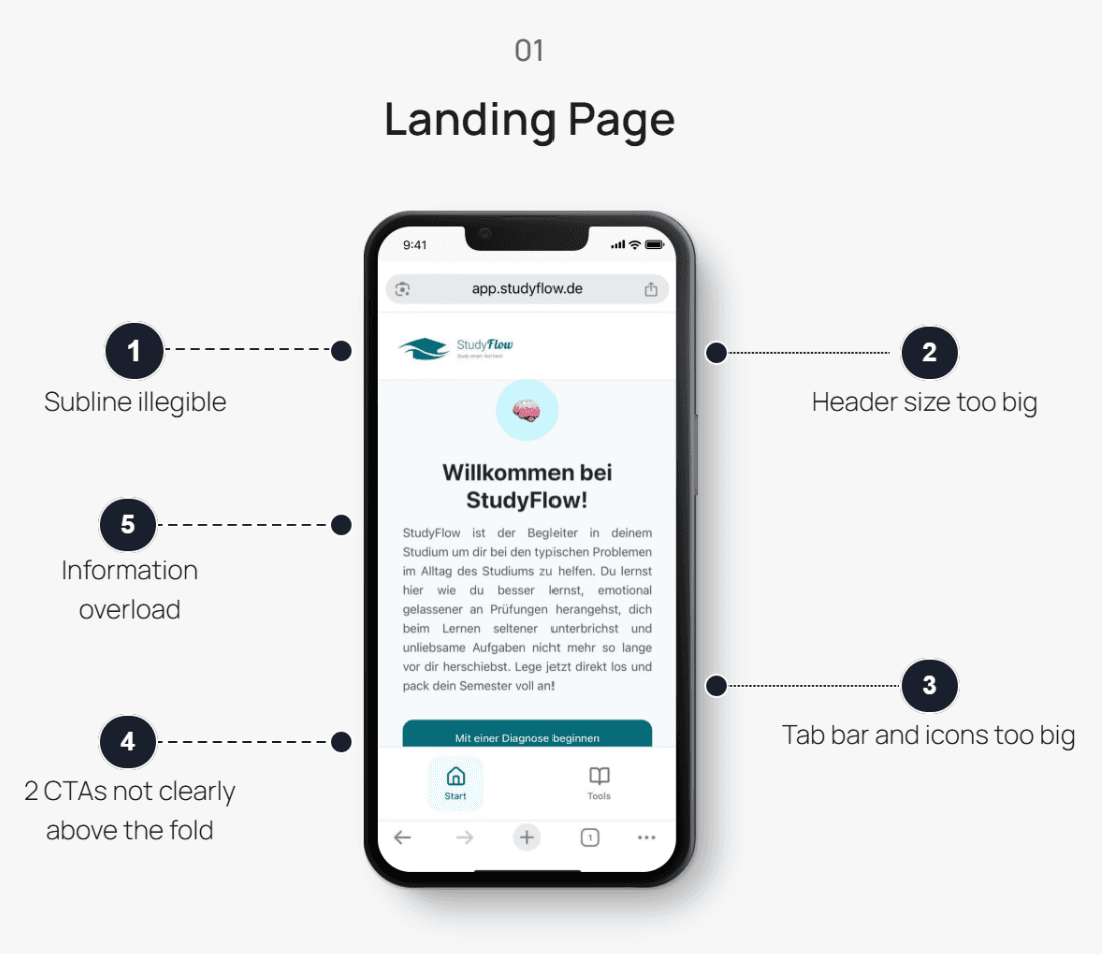

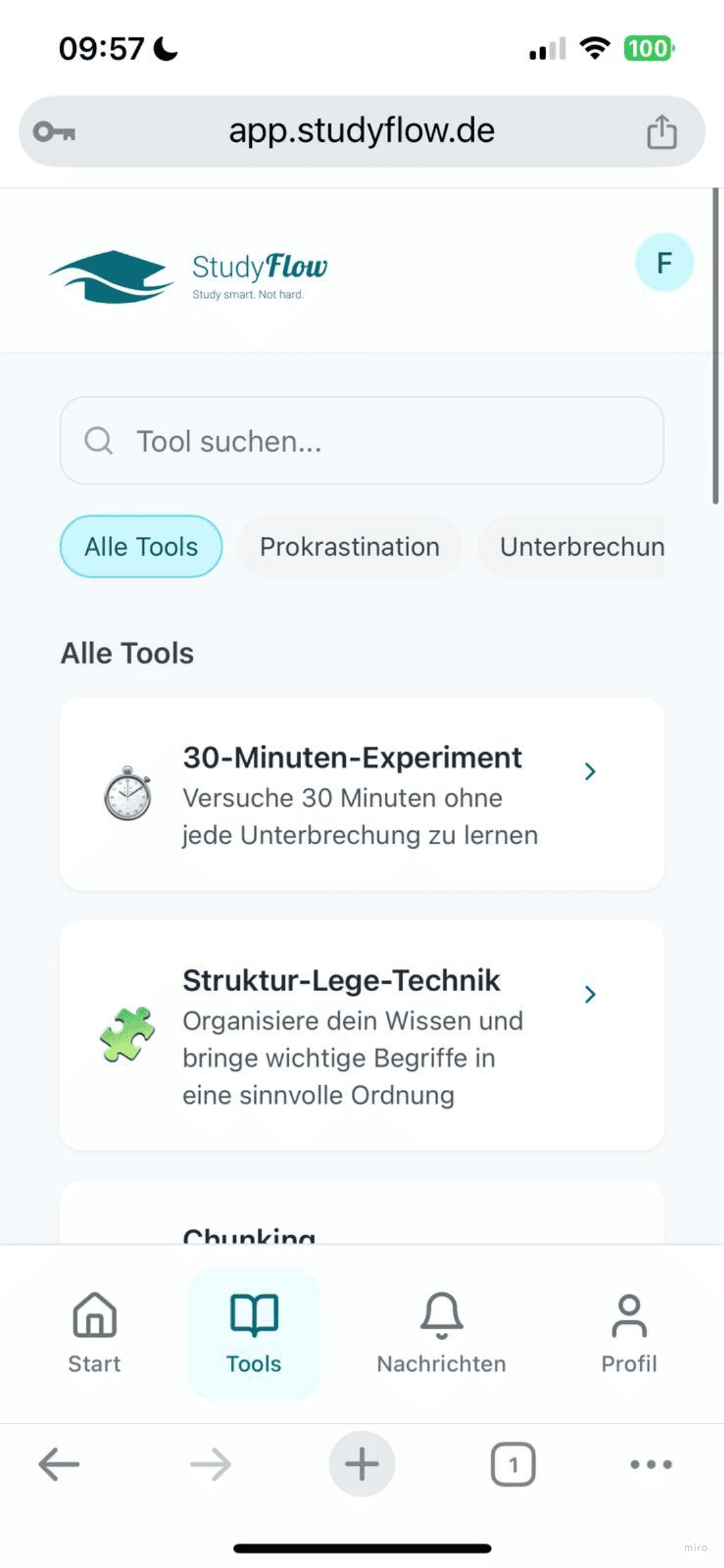

I ran a heuristic evaluation (Nielsen's 10) to identify usability issues systematically. The most critical violation was Aesthetic and Minimalist Design: the app presented 15+ tools at once with no clear starting point, making it feel like a textbook rather than a coach.


The heuristic evaluation surfaced visual issues, but not the behavior behind the churn. I ran 5 moderated usability sessions to understand how users moved through the app and where they lost motivation.

Testing Goals

Methodology

CTA

Text

Registration

Retention

Some issues that users worked around in testing were still valid heuristic violations. That users skimmed long copy or mistook a prefilled field for a placeholder does not make those patterns acceptable. A workaround is not the same as good design.

One assumption was genuinely revised: I had initially questioned the value of one CTA, but several users actively used it. This changed how I thought about the tool discovery flow.

Insights

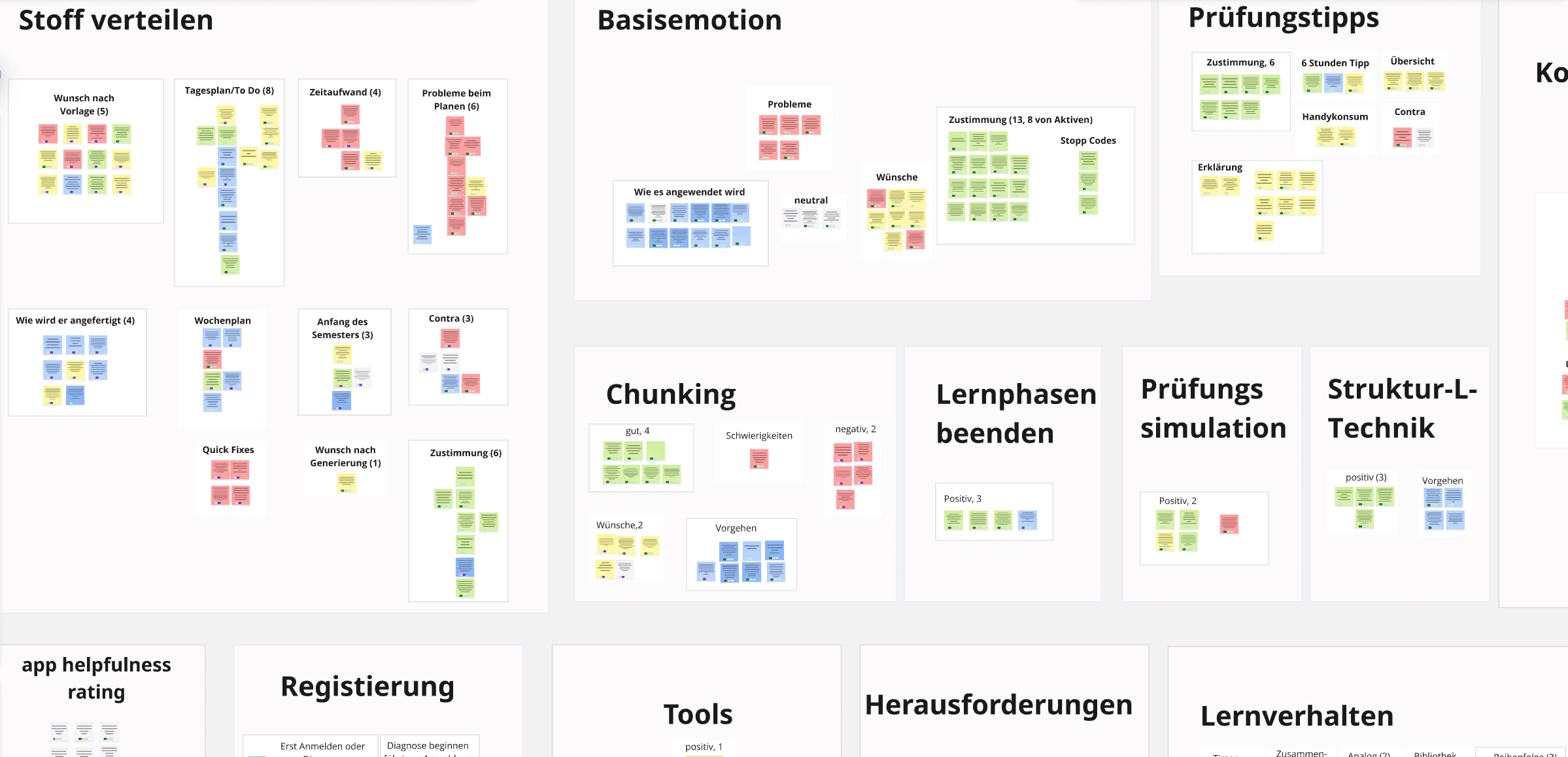

The usability sessions pointed to a retention problem: users completed the core flow but showed little reason to return. Yet the alpha test reported 41 sign-ups and positive feedback. I presented these findings to the team and decided to look closer at the backend data to understand the gap. I mapped all feedback into an affinity diagram to surface patterns across the 41 sign-ups.

I had experienced Professor Wahl's live coaching firsthand and knew it worked. But I had never observed or analyzed it systematically. To understand what made it effective, and what might be missing in the digital translation, I attended the live sessions and observed while taking notes. I then interviewed 7 users from the alpha test to understand their motivation, which tools they tried, and what made them stop.

To understand what the app was missing, I attended live coaching sessions and interviewed participants using the same structure: motivation, tool usage, and what kept them coming back.

The research revealed a fundamental gap: the live coaching and digital app teach the same methods but produce opposite results. Why?

The app carried over the methods but lost the conditions that made them work: the tools felt passive, the guidance didn't adapt, and there was no social dimension to drive accountability.

Strategy

High Retention

Accountability

Integrate progress tracking or social features replicating human connection

Progressive Pacing

Mirror live coaching’s one-method-per-week introduction to prevent overwhelm

Integrated Execution

Enable in-app task planning, preventing external workarounds that disconnect users

Behavioral Nudges

Implement streaks and achievements to replace self-directed structure

Each direction would require prototyping and testing to validate, so for the near-term iteration I delivered annotated wireframes focusing on usability improvements that could ship immediately.

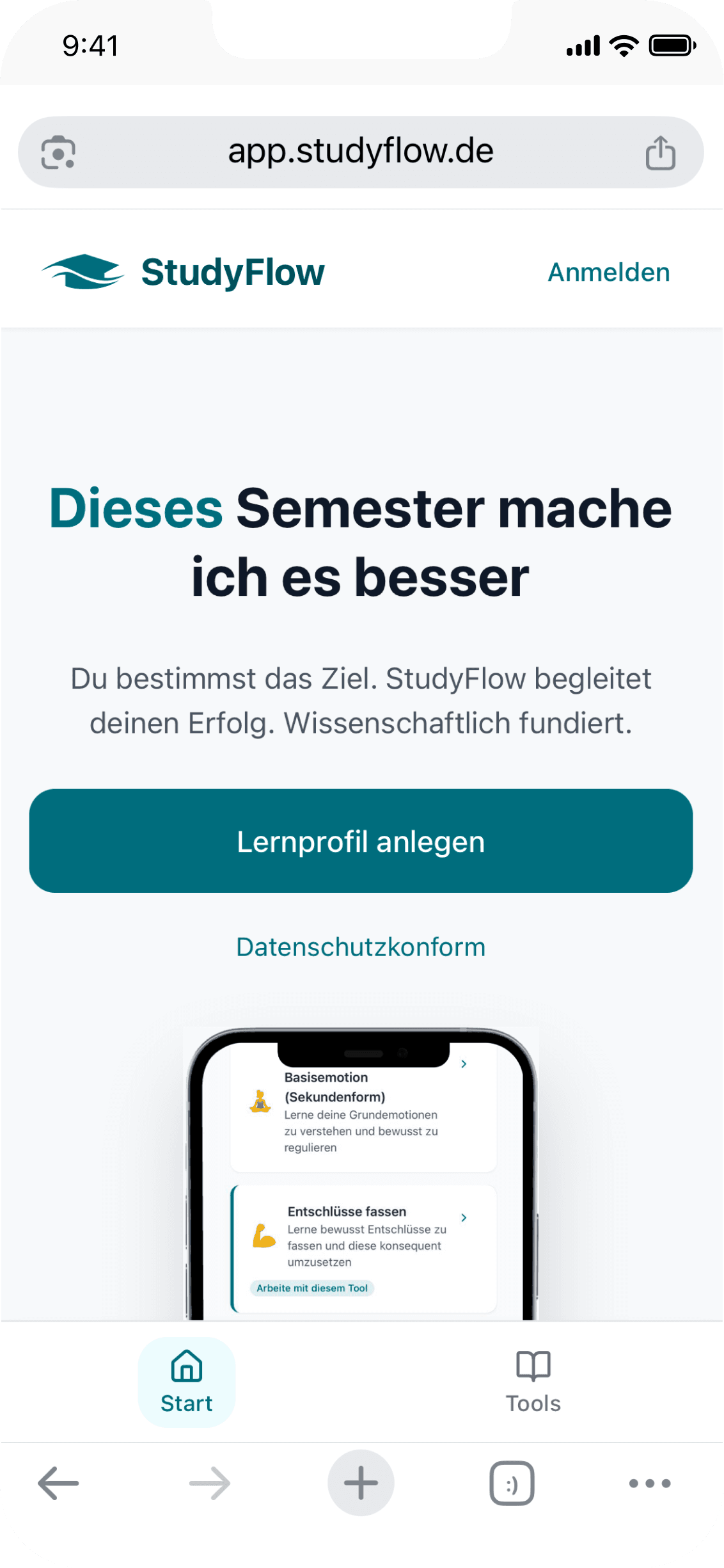

The redesigned landing page reduced cognitive load, prioritized one clear action, and added a privacy hint to remove the barrier to sign-ups.

Design

1

Task-driven dashboard reducing cognitive load

2

Progressive tool disclosure preventing overwhelm

3

In-app execution connecting planning to action

Reflection

UX is one layer, not the solution

Working through the Lean PMF Pyramid taught me where UX fits in the bigger picture. When a product struggles with retention, the answer isn't always better UX. Sometimes the gap is in the layers below: target customer, underserved needs, value proposition, feature set. That's what StudyFlow taught me to check first.

"Positive feedback" can be misleading

The team pointed to good ratings from 10 users. But 31 had already churned silently. I learned to look at who isn't talking, not just who is.

Constraints force clarity

I couldn't redesign everything. Prioritizing taught me to distinguish between "interesting to fix" and "critical to fix."

Advocacy is part of the process

Advocating clearly for a recommendation while remaining constructive when a different direction is taken is a skill this project required, and one I will carry into every client engagement.

Test if progressive tool disclosure reduces overwhelm

Measure task completion: in-app planning vs. external tools

Expand concept wireframes into a testable prototype to validate whether this direction would better serve user needs